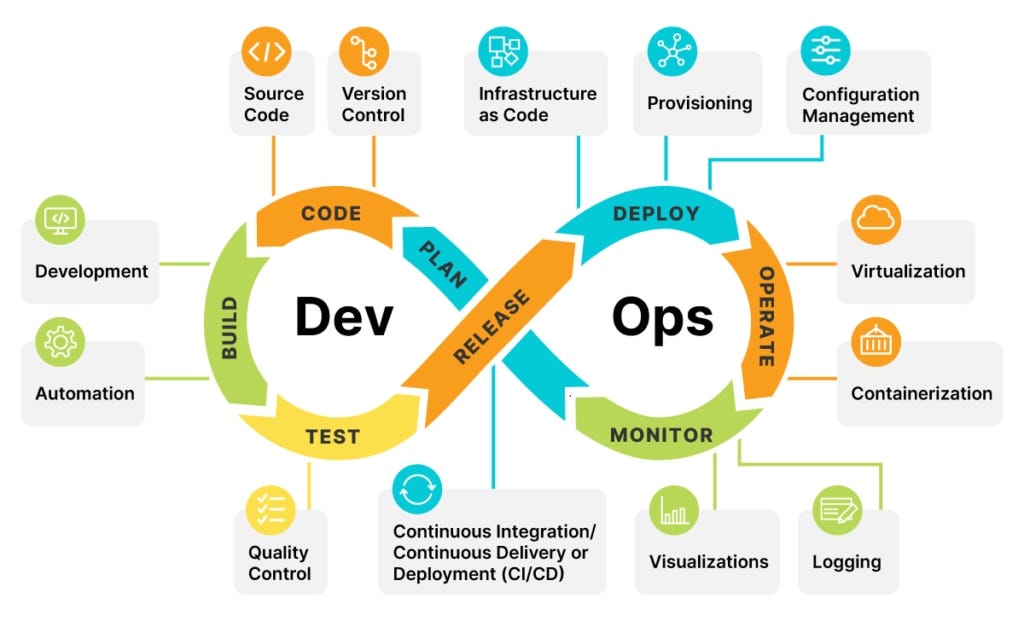

In modern SaaS environments, quality is no longer just about testing; it’s about how capable we are across the entire delivery lifecycle.

Yet many teams still struggle with a fundamental question:

“Where do we actually stand in terms of skill maturity?”

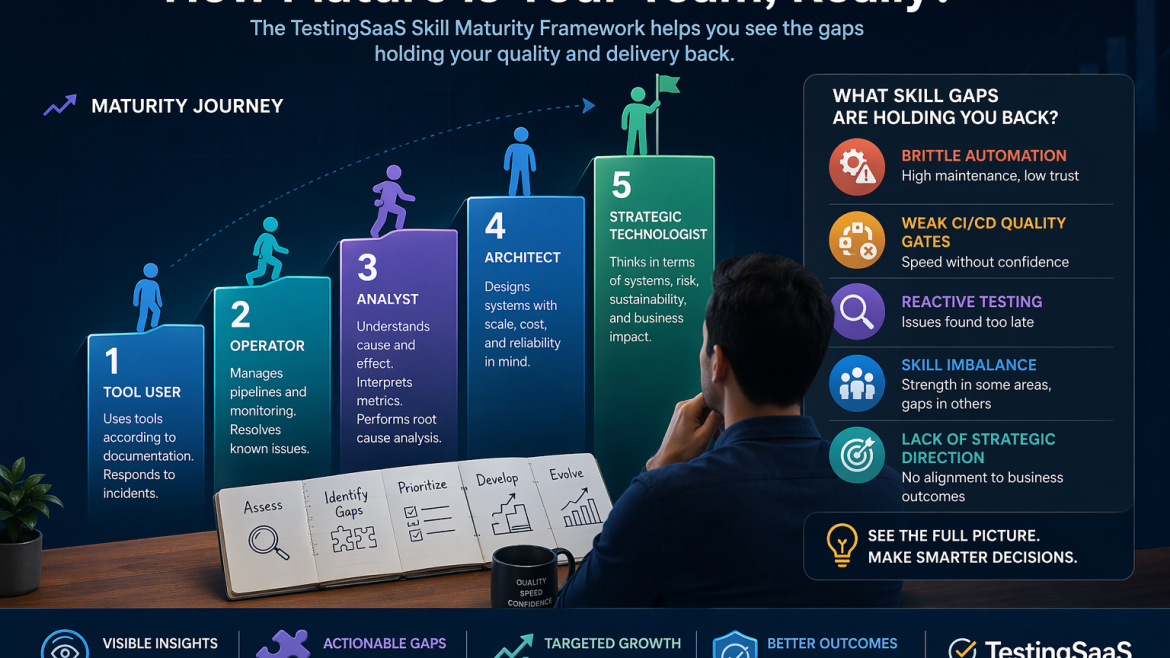

The TestingSaaS Skill Maturity Framework was designed to answer exactly that and, more importantly, to expose the gaps that are holding teams back.

The Challenge: You Can’t Fix What You Can’t See

Most organizations operate with limited visibility into their true capabilities.

You might hear things like:

- “We’re doing automation”

- “We’ve implemented CI/CD”

- “Our testing is solid”

But when you look closer:

- Automation is brittle and hard to scale

- CI/CD lacks meaningful quality gates

- Testing is reactive instead of strategic

The issue isn’t effort; it’s the lack of a structured maturity model.

What Is the TestingSaaS Skill Maturity Framework?

The TestingSaaS Skill Maturity Framework provides a practical, real-world model for evaluating skills across modern testing and quality engineering domains (and other domains too).

It breaks down capability into:

- Core skill areas (e.g., automation, exploratory testing, CI/CD, test strategy)

- Clear maturity levels (from foundational to expert)

- Observable behaviors that define each level

This allows teams to move from vague assumptions to objective, evidence-based evaluation.

How TestingSaaS Reveals Skill Maturity Gaps

1. It Defines What “Mature” Actually Looks Like

Instead of generic titles like junior or senior, TestingSaaS describes what people actually do at each level.

For example:

- Level 1 – Tool User: Uses tools according to documentation. Responds to incidents.

- Level 2 – Operator: Manages pipelines and monitoring. Resolves known issues.

- Level 3 – Analyst: Understands cause and effect. Interprets metrics. Performs root cause analyses.

- Level 4 – Architect: Designs systems with scale, cost, and reliability in mind.

- Level 5 – Strategic Technologist: Thinks in terms of systems, risk, sustainability, and business impact.

This clarity creates a shared understanding of excellence.

2. It Enables Objective, Multi-Dimensional Assessment

The framework allows teams to assess maturity across multiple dimensions, not just roles.

A single team might be:

- Strong in automation execution

- Weak in test architecture

- Missing strategic quality leadership

By breaking skills into components, TestingSaaS highlights specific, actionable gaps.
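To make the multi-dimensional idea concrete, here is a minimal Python sketch, assuming a team's assessment can be recorded as one maturity level (1 to 5, using the levels listed above) per skill dimension. The dimension names, target levels, and the `find_gaps` helper are hypothetical illustrations, not part of the published framework.

```python
from dataclasses import dataclass

# Level names taken from the framework's five levels; 0 means "not assessed" (an assumption).
LEVEL_NAMES = {
    1: "Tool User",
    2: "Operator",
    3: "Analyst",
    4: "Architect",
    5: "Strategic Technologist",
}


@dataclass
class Gap:
    dimension: str
    current: int
    target: int

    @property
    def size(self) -> int:
        # How many levels the team still has to climb in this dimension.
        return self.target - self.current


def find_gaps(current: dict[str, int], target: dict[str, int]) -> list[Gap]:
    """Return dimensions assessed below their target level, largest gap first."""
    gaps = [
        Gap(dim, current.get(dim, 0), want)
        for dim, want in target.items()
        if current.get(dim, 0) < want
    ]
    # Sort by gap size (descending), then alphabetically for a stable order.
    return sorted(gaps, key=lambda g: (-g.size, g.dimension))


# Hypothetical team: strong automation execution, weak architecture, little strategic leadership.
team = {"automation execution": 4, "test architecture": 2, "strategic quality leadership": 1}
goal = {"automation execution": 3, "test architecture": 3, "strategic quality leadership": 3}

for gap in find_gaps(team, goal):
    now = LEVEL_NAMES.get(gap.current, "Not assessed")
    wanted = LEVEL_NAMES[gap.target]
    print(f"{gap.dimension}: {now} ({gap.current}) -> {wanted} ({gap.target}), gap {gap.size}")
# strategic quality leadership: Tool User (1) -> Analyst (3), gap 2
# test architecture: Operator (2) -> Analyst (3), gap 1
```

Note that automation execution does not appear at all: exceeding the target in one dimension does not hide a shortfall in another, which is exactly the point of assessing per component rather than per role.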

3. It Exposes Hidden Imbalances

One of the most valuable insights the framework provides is where capabilities are out of balance.

For example:

- Heavy investment in tools, but low skill maturity

- Strong individual contributors, but no system-level thinking

- Advanced CI/CD pipelines, but poor test design

These imbalances are often the root cause of:

- Slow releases

- Production defects

- Scaling challenges

4. It Connects Skills Directly to Outcomes

This framework doesn’t just assess skills. It links them to real business impact.

| Skill Gap | Impact |
|---|---|
| Low automation maturity | High manual effort, slow feedback |
| Weak exploratory testing | Missed edge cases, production issues |
| Lack of strategy-level skills | Misaligned quality direction |
| Poor CI/CD integration | Delayed releases |

This makes it easier to justify where to invest and why.

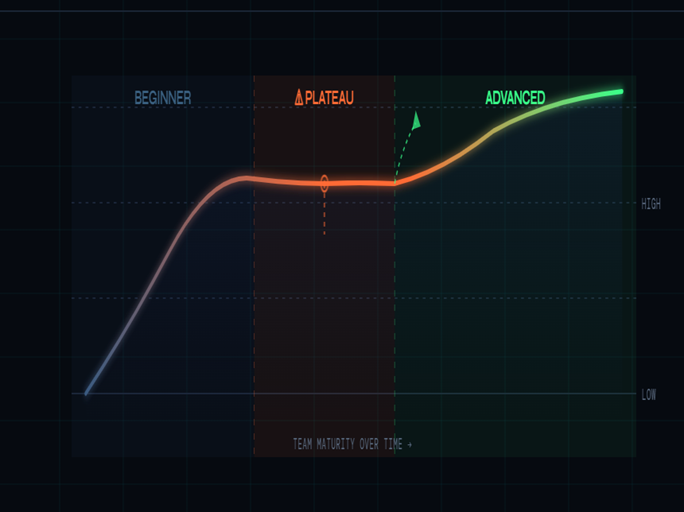

From Insight to Action

The real strength of the TestingSaaS framework is not just diagnosis; it’s direction.

Targeted Upskilling

Teams can:

- Focus on specific maturity gaps

- Build structured learning paths

- Track progress over time

Smarter Hiring

Instead of vague requirements:

“We need a senior tester”

You define:

“We need Level 3+ capability in automation architecture and CI/CD integration”

Continuous Improvement

The framework supports an ongoing cycle:

- Assess current maturity

- Identify gaps

- Prioritize high-impact areas

- Develop capabilities

- Reassess and evolve

This turns skill development into a repeatable system.
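The cycle above can be sketched in a few lines of Python. This is a hypothetical illustration, not the framework's own scoring method: it assumes each dimension has an assessed level (1 to 5), a target level, and an impact weight that you assign yourself, loosely based on the skill gap and impact table earlier in this article. The names `IMPACT`, `prioritize`, and `progress` are invented for this example.

```python
# Hypothetical impact weights (1 = low, 3 = high business impact), chosen for illustration only.
IMPACT = {
    "automation": 3,           # low maturity -> high manual effort, slow feedback
    "exploratory testing": 2,  # weak -> missed edge cases, production issues
    "test strategy": 3,        # lacking -> misaligned quality direction
    "ci/cd integration": 2,    # poor -> delayed releases
}


def prioritize(current, target, impact):
    """Rank dimensions below target by (gap size x impact weight), highest first."""
    scored = []
    for dim, want in target.items():
        gap = max(0, want - current.get(dim, 0))
        if gap:
            scored.append((gap * impact.get(dim, 1), dim))
    # Highest score first; ties broken alphabetically.
    return [dim for score, dim in sorted(scored, key=lambda s: (-s[0], s[1]))]


def progress(before, after):
    """Level change per dimension between two assessment rounds."""
    return {dim: after.get(dim, 0) - before.get(dim, 0) for dim in sorted(before.keys() | after.keys())}


target = {"automation": 3, "exploratory testing": 3, "test strategy": 3, "ci/cd integration": 3}

# Assess -> identify gaps -> prioritize.
round_1 = {"automation": 2, "exploratory testing": 2, "test strategy": 1, "ci/cd integration": 3}
print(prioritize(round_1, target, IMPACT))
# ['test strategy', 'automation', 'exploratory testing']

# Develop capabilities, then reassess and compare.
round_2 = {"automation": 3, "exploratory testing": 2, "test strategy": 2, "ci/cd integration": 3}
print(progress(round_1, round_2))
print(prioritize(round_2, target, IMPACT))
# ['test strategy', 'exploratory testing']
```

Multiplying gap size by impact is only one possible heuristic; a team could just as well rank by impact first and use gap size as a tie-breaker.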

Final Thoughts

Skill gaps are inevitable. Hidden skill gaps are dangerous.

The TestingSaaS Skill Maturity Framework gives organizations the clarity to:

- See where they truly stand

- Understand what’s missing

- Take targeted, effective action

Because in a world where speed and quality define success:

Maturity isn’t optional; it’s a competitive advantage.