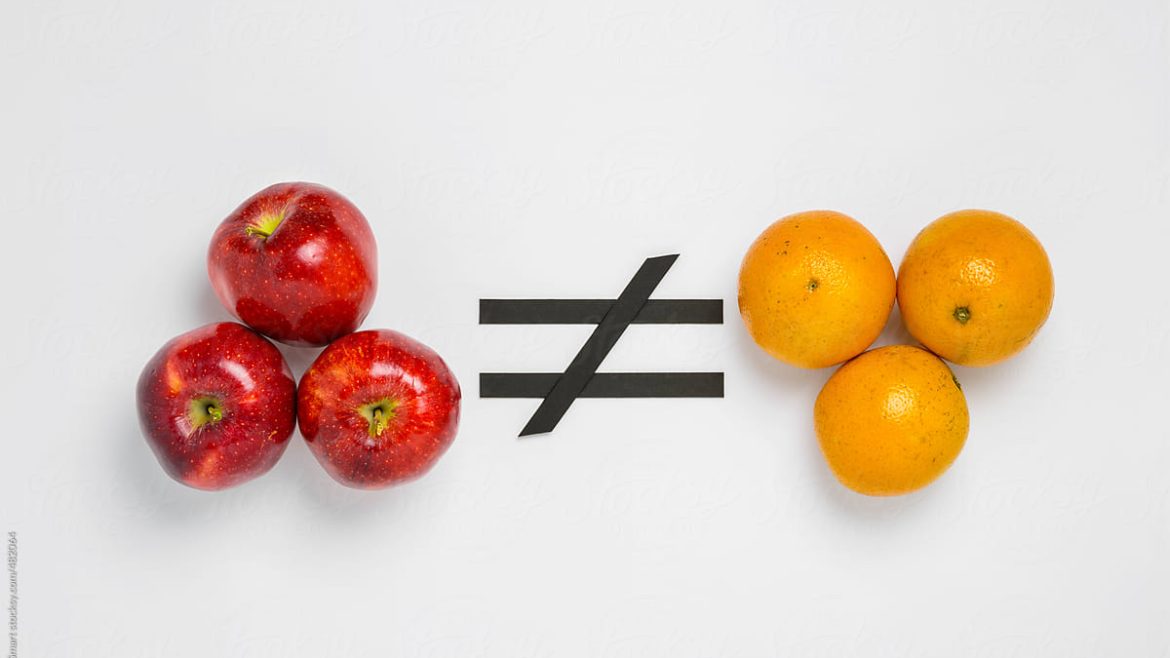

Comparing apples with oranges?

Yes, that’s what I think is going on when GreenIT professionals are comparing cloud computing vendors on their energy costs per LLM query.

Last week Google published an article about how it measures the environmental impact of AI inference. All of LinkedIn went wild, and the reaction was polarized: supporters and critics fell over each other trying to shout the loudest.

But what did Google measure? That’s what TestingSaaS will find out.

Measuring the environmental impact of AI inference by the cloud/AI providers

First of all, Google measured the energy cost of a single Gemini text prompt (text only; other media cost a lot more energy). The study takes a broad view: it includes not only the power used by the AI chips that run the models but also everything needed to support that hardware, such as cooling, along with the associated water consumption.

The estimation results: the median Gemini Apps text prompt uses 0.24 watt-hours (Wh) of energy, emits 0.03 grams of carbon dioxide equivalent (gCO2e), and consumes 0.26 milliliters (or about five drops) of water.

How Google did this is explained in their technical paper. Explaining all of it here would go too far.

To give a better understanding of these numbers, Google stated:

The Gemini Apps text prompt uses less energy than watching nine seconds of television (0.24 Wh) and consumes the equivalent of five drops of water (0.26 mL) and 0.03 grams of carbon dioxide (market estimate)
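As a quick sanity check on the numbers quoted above, here is a back-of-the-envelope sketch in Python. It only divides Google's published medians by each other, so the results are rough implied ratios, not figures Google reports. The function names are made up for this illustration.

```python
# Figures quoted above for the median Gemini Apps text prompt.
ENERGY_WH = 0.24   # watt-hours per prompt
CO2E_G = 0.03      # grams CO2e per prompt (market-based estimate)
WATER_ML = 0.26    # millilitres of water per prompt
TV_SECONDS = 9     # "nine seconds of television"


def implied_tv_power_watts(energy_wh: float, seconds: float) -> float:
    """Power of a TV that uses `energy_wh` in `seconds` (Wh divided by hours)."""
    return energy_wh / (seconds / 3600)


def per_kwh(amount: float, energy_wh: float) -> float:
    """Scale a per-prompt amount to the amount per kilowatt-hour of energy."""
    return amount / energy_wh * 1000


tv_watts = implied_tv_power_watts(ENERGY_WH, TV_SECONDS)
carbon_g_per_kwh = per_kwh(CO2E_G, ENERGY_WH)
water_ml_per_kwh = per_kwh(WATER_ML, ENERGY_WH)

print(f"Implied TV power: {tv_watts:.0f} W")
print(f"Implied carbon intensity: {carbon_g_per_kwh:.0f} gCO2e/kWh")
print(f"Implied water use: {water_ml_per_kwh / 1000:.2f} L/kWh")
```

The television comparison works out to a set drawing roughly 96 W, so the analogy is at least internally consistent. The other two ratios are naive (a ratio of medians is not the median of the ratios), so treat them as orders of magnitude only.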

And although the Google scientists also included some critical caveats in their paper and article (median, market estimate, etc.), LinkedIn went into critical mode. Just search LinkedIn for "google gemini AI energy" and you will find plenty of positive and negative posts on this subject.

Mistral

Last July, Mistral AI published a full life cycle assessment (LCA):

The environmental footprint of training Mistral Large 2: as of January 2025, and after 18 months of usage, Large 2 generated the following impacts:

– 20.4 ktCO₂e,

– 281,000 m³ of water consumed,

– and 660 kg Sb eq (standard unit for resource depletion).

The marginal impacts of inference, more precisely of using their AI assistant Le Chat for a 400-token response (excluding users’ terminals):

– 1.14 gCO₂e,

– 45 mL of water,

– and 0.16 mg of Sb eq.

Awesome, now we can compare the results with Google's, or can we?

Comparing Google and Mistral AI energy costs

That’s like comparing apples with oranges.

Why?

Just look at what is measured:

– the “marginal by prompt” (Google),

– the “total cost of the cycle” (Mistral).

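What Google measured is completely different from what Mistral measured.

Why is this wrong?

Because the two numbers describe different things. Google reports the footprint of a median text prompt; Mistral reports a life cycle assessment, with inference impacts given per 400-token response. Without a shared scope and a shared unit of work, no meaningful comparison can be made.

There is no common standard to compare against.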
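To make the mismatch concrete, here is a minimal Python sketch. It stores each vendor's figure together with its scope and its unit of work, and refuses to compute a ratio when those differ. The `Footprint` class and `co2e_ratio` function are hypothetical names for this illustration, and the scope labels are simply the ones used in this article, not terms from any official standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Footprint:
    vendor: str
    scope: str         # what is counted, using this article's own labels
    unit_of_work: str  # what one "query" means in the vendor's report
    co2e_g: float      # grams CO2e per unit of work
    water_ml: float    # millilitres of water per unit of work


def co2e_ratio(a: Footprint, b: Footprint) -> float:
    """Return a.co2e_g / b.co2e_g, but only for like-for-like measurements."""
    if (a.scope, a.unit_of_work) != (b.scope, b.unit_of_work):
        raise ValueError(
            f"Apples and oranges: {a.vendor} reports '{a.scope}' per "
            f"{a.unit_of_work}, {b.vendor} reports '{b.scope}' per "
            f"{b.unit_of_work}."
        )
    return a.co2e_g / b.co2e_g


google = Footprint(
    "Google", "marginal by prompt", "median Gemini Apps text prompt", 0.03, 0.26
)
mistral = Footprint(
    "Mistral AI", "total cost of the cycle", "400-token Le Chat response", 1.14, 45.0
)

try:
    co2e_ratio(mistral, google)
except ValueError as err:
    print(err)
```

Dividing 1.14 by 0.03 is easy, but the result would suggest a 38x difference that the underlying reports do not support. A shared measurement standard would, in effect, fix `scope` and `unit_of_work` for everyone.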

What to do now?

So instead of criticizing the reports of how AI energy consumption is measured by the cloud computing vendors, why not figure out together what a suitable measurement standard could be for AI energy consumption in GreenIT?

Or not, Green Software Foundation?

That would make the world less polarized than it already is.

We’re engineers, let’s leave the politics out of it!